By Dan Moren

June 29, 2017 2:33 PM PT

A couple weeks with the iOS 11 beta

Note: This story has not been updated for several years.

Every year we do the dance: Apple releases betas of the new versions of its operating systems and we go ahead and install them. Why, when we tell all the rest of you to be careful? Well, in part because we’re the kind of people who live to be on the bleeding edge, but also so we can write about the new versions of macOS and iOS and tell you what to expect. We do it for you, readers.

So I’ve been using the beta of iOS 11 for a couple weeks now on my 10.5-inch iPad. (I was going to wait until the public beta, I really was, but this machine simply cries out for iOS 11’s powerful features.) In that time, I’ve tried to spend a lot more time using my iPad than I used to, even if it’s still not my main computing device.

For me, iOS 11 makes that a lot more plausible than it used to be. Continuing in the tradition of iOS 9, which finally opened up the ability to have more than one app onscreen at the same time, iOS 11 has refined that into a system that is far more powerful, even if it’s not without its idiosyncrasies.

So, with the understanding that this is still a beta, and, of course, betas are subject not only to bugginess, but also to change and refinement, here are a few observations from my time using iOS 11.

Multitasking

Let’s start with multitasking, because it’s the big one. The previous model included two specific ways to get multiple apps onscreen at the same time: the first was Slide Over, in which you could simply slide a pane containing an iPad app over the right-hand side of the screen. The second was Split Screen, where you could actually bring some (not all) apps onscreen as a separate pane, shrinking both apps to smaller sizes.

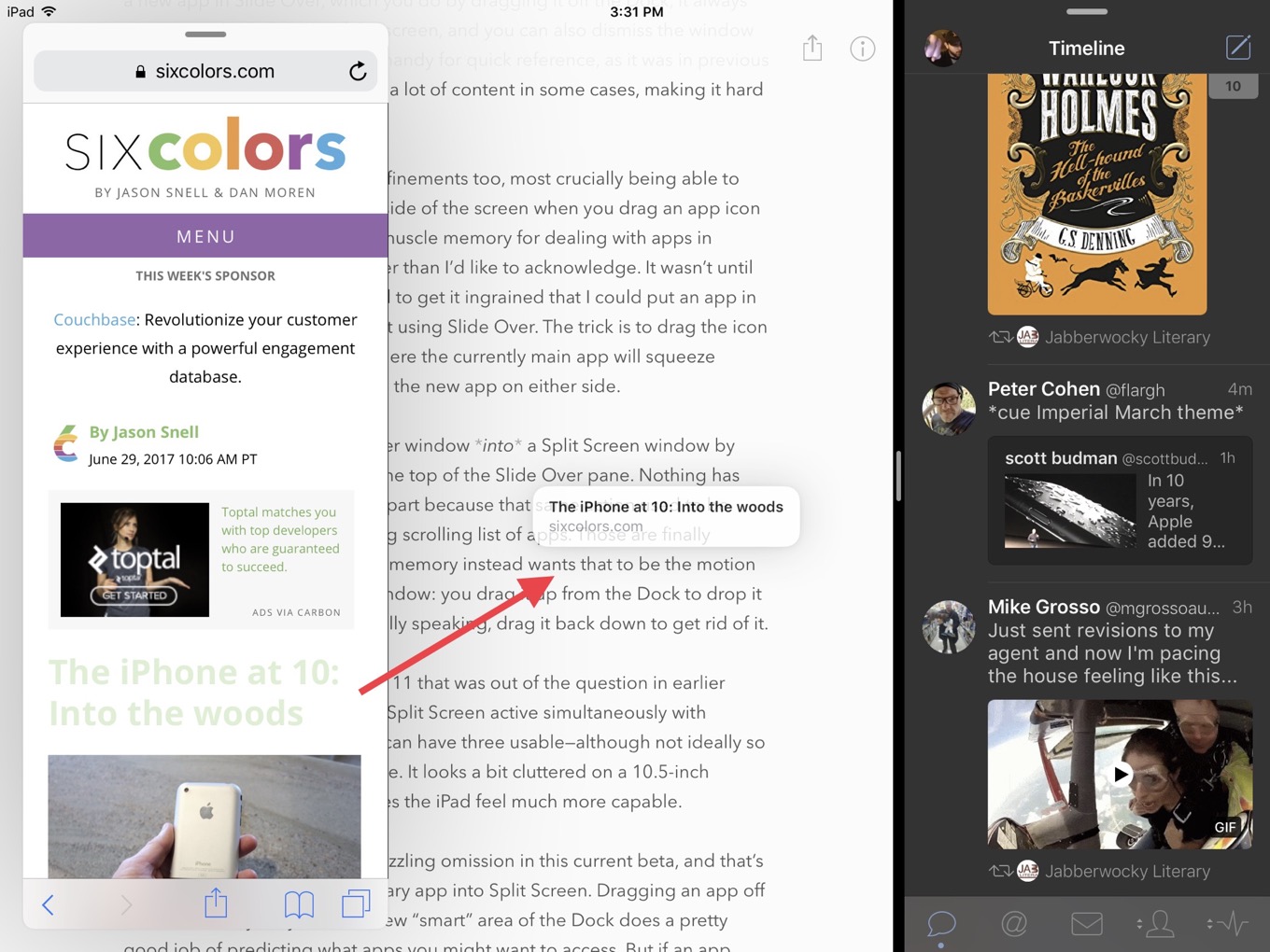

Slide Over has been completely reworked in iOS 11. Rather than simply being a pane that slides out from the right side of the screen, it’s turned into a hovering window that can be moved to either the right or left side of the screen, though it snaps to either side—it can’t be positioned arbitrarily, like a Mac window. This makes it easier to have an app floating but not constantly blocking the same content. It’s still a bit particular, though: when you summon a new app in Slide Over, which you do by dragging it off the Dock, it always spawns on the right side of the screen, and you can only dismiss the window by sliding it off to the right. It’s handy for quick reference, as it was in previous iOS editions, but it still obscures a lot of the display, making it less than ideal for working in two apps simultaneously.

Split Screen has gotten some refinements too, most crucially the ability to drop apps on the right or left side of the screen, which you now do by dragging an app icon out of the Dock. Changing the muscle memory for dealing with apps in multitasking has taken me longer than I'd like to admit. It wasn't until fairly recently that I really got it ingrained that I could put an app into Split Screen mode without first dropping it onscreen as a Slide Over window. The trick is to drag the icon to the edge of the screen, where the current main app will squeeze slightly to indicate that you can drop the new app on that side.

You can also convert a Slide Over window into a Split Screen window by pulling down on the handle at the top of the Slide Over pane. Nothing has taken me longer to adjust to, in part because that same action used to be what brought up iOS 9/10's frustrating scrolling list of apps. That list is finally gone, but my muscle memory instead wants that to be the motion for dismissing a Slide Over window: you drag it up from the Dock to drop it onscreen, so to my mind, it would be logical to drag it back down to get rid of it.

But one thing you can do in iOS 11 that was out of the question in earlier versions is have Slide Over and Split Screen active simultaneously with different apps. That means you can have three usable apps onscreen at the same time. It looks a bit cluttered on a 10.5-inch screen, but it works, and it makes the iPad feel much more capable. There's something refreshing about this: it feels inelegant, but it also feels like the OS is finally getting out of the way to let me do something, even if it's inadvisable. To me it seems like a philosophical change for Apple: rather than telling me that this isn't the way to do something, the OS is simply throwing up its hands and saying "You want it? Have at it!"

However, there's still one big, puzzling omission in multitasking in the current beta, and that's how difficult it is to get an arbitrary app into Split Screen. Dragging an app off the Dock is very easy, and the new "smart" area of the Dock does a pretty good job of predicting what apps you might want to access. But if an app doesn't show up in the Dock, you still end up either scrolling back through the thumbnails in the Mission Control-style multitasking interface or going back to the Home screen and launching it from there or from Spotlight. A simple search bar in the multitasking view would go a long way toward making this easier.

Drag and drop

I hesitate to even write about drag and drop yet, because without third-party adoption, we’re only getting part of the story. But where it works, it’s pretty cool. Dragging a picture from a web page into an email message makes life easier on several fronts, and as more and more third-party apps embrace these new features, it will become even more powerful. Even just dragging URLs between two Safari tabs is super handy.

If there’s one thing that’s poised to be a bit tricky in acclimating users to drag and drop, it’s that Apple has slightly overloaded the “tap and hold” mechanic. In the beta, drag and drop seems to take precedence when you tap and hold on something—you have to keep holding in order to bring up a contextual menu.

Meanwhile, the iPhone doesn’t seem to get the same drag-and-drop features, but it does have 3D Touch, which means that there are a few different mechanics going on between the iPhone and the iPad. I think we could be seeing a fundamental fork here between the tablet and the phone; it’ll be interesting to see how it develops.

Files

Like drag and drop, Files is something that promises a lot more functionality in the future, after third parties sign on. Currently, you can see other providers like Microsoft and Google in the app, but they're using the older-style API, which doesn't offer quite as seamless an experience as the one Apple has shown off. It remains to be seen whether all of them will sign on: Dropbox in particular never fully implemented the previous version of the storage API. Maybe the company worries that if all of your Dropbox files are available in Apple's app, you'll never use the Dropbox app anymore. (Personally, I think that's a little silly: the attraction of Dropbox is the service, not the app.) Update: Dropbox said in a post earlier this month that it does intend to support the new API for Files. Hurrah!

Screenshots/screen recording

Perhaps nothing will have such a big impact on my life as iOS 11's new Screenshot features. For a few reasons, really: first, I take a lot of screenshots. Like a whole lot. Scroll back through my Camera Roll, and you'll skim through hundreds if not thousands of shots of apps, user interfaces, and so on. For the first time since the introduction of the screenshot feature¹, Apple's improved the workflow of taking screenshots and built in an interface for easily marking them up. This is a phenomenal improvement that won't necessarily be a big deal for everybody, but developers, designers, and tech journalists are going to love it.

Speaking of which, Apple has also, for the first time, made screen recording a built-in feature of iOS, accessible right from Control Center. I suspect the feature was added primarily to make it easy for developers to record video demos of their apps for the App Store. But it's also a boon not only to those of us in tech journalism who occasionally want to document how features work, but also to anybody who has ever wanted to send a video explaining how to do something to their less tech-savvy friends or relatives. (Previously you could record the iPhone screen by plugging it into your Mac and using QuickTime Player; this is far more convenient.)

Notes

It’s been a long road, but Apple’s transformed its Notes app from a Marker Felt monstrosity into one of the most capable apps in its arsenal. The addition of easy drawing in iOS 11 is a huge boon, and one of the reasons I went and bought a Pencil. The ability to tap the Pencil to the lock screen and just start writing is a little wacky, but kind of cool—it’s akin to being able to quickly swipe to the Camera app from the lock screen.

More to come

There's way, way too much in iOS 11 to talk about it all here, and this is hardly intended to be a thorough review. We're going to be living with the iOS 11 betas for the next couple of months, and there are sure to be tweaks along the way. Right now, it feels very promising, and if it shipped tomorrow, my advice would be the same as it ever is for iOS updates: you're going to install this. But the benefit of the beta period is that there's a chance for you to make your voice heard and for Apple to spend some time listening to what users and developers are looking for.

¹ Remember that the iPhone didn't originally have a screenshot feature available to users? Yeah, that was a tough time.

[Dan Moren is the East Coast Bureau Chief of Six Colors, as well as an author, podcaster, and two-time Jeopardy! champion. You can find him on Mastodon at @dmoren@zeppelin.flights or reach him by email at dan@sixcolors.com. His next novel, the sci-fi adventure Eternity's Tomb, will be released in November 2026.]
